Sorry, I meant to come back to this much sooner. A lot of good stuff has already been discussed so far. My reply is going to be a bit long, so apologies in advance. What started out as a simple reply has morphed into a kind of full introduction to the problem for the uninitiated. If you've already written an emulator, there's a lot of stuff here you can probably skip, but for those who haven't I'm going to share a definition of the problem, to make sure anyone who might read this is on the same page, as I doubt anyone who hasn't tried to write an emulator really appreciates all the difficulties here. I don't think what I'm going to say will contain any earth shattering revelations, but I'll try and define the problem clearly, then cover the things I learned when working on Exodus.

In real hardware, the systems we're trying to emulate (usually) have multiple "active" devices, and they run in a truly parallel fashion. The timing of interactions that occur in this system are inherent. If you have two devices, both running off a shared/related clock source, both with fixed known timing charateristics, they'll interact in a predictable way. They may interact millions of times a second, with their operations interleaving in a consistent fashion. Emulating a single active device is relatively easy. Emulating multiple active devices at once, and getting absolutely every interaction between them in the right order with the correct relative timing is much, much harder.

It's worth noting at this point, that historically most emulators haven't really tried to solve this problem. Genecyst could run Mega Drive games at full speed on a Pentium 133. How could it do this? One reason was that, like most emulators after it, it didn't attempt to get perfect timing accuracy. Although a bit of a simplification, it would basically just say "run the 68000 for X amount of time, then run the Z80 for X amount of time, then run the 68000 for another X, etc". The timing accuracy was limited to saying the two devices are "within X of each other" at any point in time. As it happens, that's enough to get a lot of code working. Why? Because the timing requirements often depend on the actual code being run within the system you're emulating. You can write code that'll work even if the timing of the system is massively off. You can also write code that requires bleeding-edge timing cases to be emulated perfectly, or it all falls down in a massive heap. If you want to write an emulator that plays some (or even most) games on a given system with the apparent correct result as far as the player can see, and you don't care about more than that, you can do some really simple nieve things and get what you want. If you want to write an emulator that runs all code exactly and precisely in the same way as the original system, such that nobody can write any code that can behave differently on your emulator than the real hardware (intentionally or unintentionally), you have to go all in and fully emulate the timing interactions faithfully.

So that's the problem, and why we want to solve it. I've said emulating at this level is harder. It's harder for two main reasons:

1. Obtaining precise information on the timing of operations the devices performs is difficult, as the details are often not documented, or where they are the documentation is usually insufficient and/or incorrect.

2. The very nature of modern CPU architecture is ill-suited to running code which can emulate these systems at high speed.

Point 1 is well understood, and it can be solved over time with hardware testing and analysis. Point 2 is the issue being discussed here though. We want to be able to emulate a system as fast as possible, but trying to solve the timing problem creates challenges for performance.

Unfortunately, CPUs in general are poorly suited to truly concurrent work. A traditional processor wants to run a single stream of sequential instructions. Ideally, it wants that stream of instructions to spend most of its time in relatively the same areas of nicely co-located code, taking the same branches almost every time, working with data that sits close together in memory. That's what CPUs are great at doing at high speed, spinning around the same area of code, working with the same, or at least largely sequential, blocks of data. Where you start to stray from that ideal, you incur performance penalties. Now lets look at what's involved in emulating multiple active devices with perfect timing accuracy. We can't, on a single core, run code for more than one device at the same time. This means we need to interleave them, running (for example) one step from device A, then one from device B, then one from device C, then one from device A again and so on. For devices clocked in the MHz range, we might have to loop over this sequence millions of times a second in order to emulate a system in real-time. Unfortunately, this means we don't get to spend much time spinning around in a small pool of nicely co-located code working with the same data block. This means performance penalties (cache misses in particular). We also have extra insructions being run to compare the relative time of each active device, meaning less cycles are being burned actually emulating functionality. Our Genecyst example had the same kind of loop, but they ran hundreds of steps from each device before switching. This was better suited getting higher performance out of the CPU. We don't have that luxury, we need to interleave the steps at a much finer level, so we inherently take a performance hit here on our single threaded model.

Ok, so we take a performance hit, but does it matter in reality? It depends on the system you're emulating. Ideally, we'd have a single super-fast core (100GHz+ please) and run everything single threaded. That's the easiest option by far. We may still run slower than Genecyst on the same system, but if we can run anything 20 times faster than real-time, who cares right? While that's probably true, the problem is, we don't have a 100GHz core, and we can't expect one to appear anytime soon. Single-thread CPU performance pretty well flatlined 15 years back. We've hit physical limits that to date haven't been overcome, and gains year on year have been extremely modest in this area. If your system is simple enough, you might be able to get away with a single threaded approach for everything, but the more active devices you have and/or the smaller their individual "steps" in time (IE, how fast they're clocked), the more time you take. Adding more features (IE, debugger options) or more accurate emulation (IE, obscure edge cases) often doesn't come free either, and you take a bit of a performance hit, which can start to add up as byuu mentioned. Consider that the MegaDrive+MegaCD+32x combo has 5 cpus, 2 graphics chips, 3 sound chips, plus other specialist hardware. Also consider that it can easily take hundreds or thousands of cycles to emulate in software what can be done in one cycle by a specialist device in hardware. Now throw in trying to get that perfect timing accuracy, interleaving steps from each device millions of times a second. If you're trying to make all this run full speed on a 3-4GHz core single threaded, it's becoming a bit of a squeeze. The way I see it, there are two fundamental approaches to this problem:

1. Try and make a more efficient single-threaded (cooperative-threaded) model

2. Try and use a multi-threaded (preemptive-threaded) model

Byuu is shooting for option 1 here, and is having problems with getting everything done in real-time on one thread. I went for option 2 in Exodus, and had different problems, which I'll go into below. Ultimately I think if you want a model that scales to more complex systems like this, you really have no choice but to look at multi-threaded models, the trick is figuring out what an efficient multi-threaded model looks like for emulation purposes. I think you can combine the best points of both models into a kind of middle-ground, which is where I see the solution here, and I'll try and outline by the end of this post.

Now a discussion on multithreaded programming on modern CPUs in general. All modern CPUs have more than one core. It's worth noting, the only reason this is the case is because we stopped finding easy ways to make a single CPU core faster. Intel would be pumping out a single core 100GHz monster today if they knew how to build it, and we'd all be better off with it. Instead, we're stuck with multiple slower cores glued together. A multi-core CPU is well suited to running independent blocks of code, with independent blocks of data, in parallel with unknown/variable timing. Two cores can interact, however there's (relatively extreme) overhead for synchronization between the cores. Ideally two cores are occupied with completely separate non-related code and data. If you want to use multiple cores concurrently in a single program, the key to performance is to require interaction (IE, timing synchronization or data sharing) between those cores as little as possible, doing as much work as possible between interactions.

Now consider what we're trying to do in emulation and how we can make it multithreaded. First lets look at the obvious model that doesn't work. A nieve approach would be to say, take each "active" device, and run them in parallel with one device per core. Rather than that loop we talked about where we run "one step from device A, then one from device B, then one from device C", we instead create three threads, one for devices A, B, and C respectively. In those threads, we run steps for each device independently. Right away we have problems. How do we keep them in sync with each other? In the real hardware, the devices run concurrently, but the timing is fixed and inherent. With a multi-core CPU, we can (potentially) emulate the devices in parallel, but we can't reproduce the timing inherently, we need to engineer it in code. Each thread for each device therefore would need to check the execution progress of each other before executing each step, to ensure that no device goes ahead of another device. In that model, where you have N devices, you'd pontetially need to check the progress of N-1 devices each step, and block/wait on them until they'd caught up to where you are before advancing. As discussed above, modern CPUs are bad at doing this, they want you to synchronize between threads as little as possible, running large amounts of code between each interaction. We have the reverse, with millions of interactions per second, and (usually) small amounts of code between each interaction. The CPU doesn't like this. This approach is very slow, and it actually gets worse the more devices you add. You're going to run significantly slower in this model than you would have using the simple single-threaded model we started with.

So what do we do? At this point, it's worth recognizing that the thing that hurts performance most for both our single-threaded or our multi-threaded models is the same: synchronization. Every time we need to switch to or wait for another device, it hurts performance. Our primary goal in either case then is to minimize the points at which we need to synchronize with other executing devices. To do this, we need to take a closer look at when synchronization is actually required, so we can try and minimize the points at which we bring things in sync with each other. To detail this, I define a few "types" of dependencies between devices:

Two-way dependencies:

A two-way dependency is where two active devices need to be kept in perfect sync. These kind of dependencies really suck for emulation, but there's not much you can do about them. In the Mega Drive for example, the 68000 and Z80 can both take ownership over each other's buses, suspending the other CPU until the operation is complete. This is done simply by trying to read or write sections of memory. In both cases, these events occur unpredictably without the involvement of the suspended CPU. This is an example of a worst-case unsolveable two-way dependency. You can never run the 68000 ahead of the Z80, as if the Z80 attempts to access the 68000 bus, but you've already advanced the 68000 a few clock cycles ahead and it's gone ahead and performed a bus operation that never should have occurred as it was supposed to be suspended, you've already advanced with incorrect timing. The same is true in reverse, if the Z80 is further ahead then the 68000 takes bus ownership, you'll already be off with your timing. The only real solution I know of here is to run the devices in lock-step, synchronizing timing after each indivisible execution step where external changes are either made or become visible which can affect the execution of the device. In Exodus currently, where a two-way dependency like this exists, I advance both devices together on a single thread in lock step, IE, I fall back to a single-threaded execution model between these devices.

One-way dependencies:

These are better. With a one-way dependency, a given device is like a "slave" of another. In this scenario, it should be possible to advance the "master" device much further ahead, and only synchronize when it performs an operation where the slave could affect the result. The sub-CPU in the MegaCD is an example of this I believe. To my understanding, there's nothing the sub-CPU can do which will have any effect on the main 68000, until the main 68000 attempts itself to access memory or shared registers to which the sub-CPU has access. In this case, you can afford to run the main 68000 significantly ahead of the sub-cpu, however the sub-cpu still needs to ensure it never advances beyond on the main 68000 in this case, as the main 68000 is capable of doing things like obtaining bus ownership that directly affect the sub-cpu. Depending on the code, these kind of interactions may occur frequently or not at all, but in either case you shouldn't have to synchronize nearly as much as you would with a two-way dependency. This is a case where you should be able to safely let the main CPU run thousands of cycles ahead, then bring the sub-cpu forward thousands of cycles too in one go. This kind of dependency allows you to use two different execution threads for the two devices, but depending on the code being run, it may not perform any better, or may perform more slowly in extreme circumstances.

Shared passive device dependencies:

These are good ones, easy to manage. An example might be dual-port RAM, or some kind of specialist device that is mapped into multiple buses. If multiple devices can interact with this passive device (IE, by reading or writing particular addresses), but they don't know or care what the device is doing unless they "check" on it by accessing its mapped memory range or the like, it's a passive dependency. You don't need any synchronization at all if only one device can access this kind of passive device at a time independently (IE, if there's only one bus), but if a second device can also interact with it, but there's no unsolicited access, it's enough to simply synchronize the connected devices when they attempt to access the shared passive device, IE, by making sure other devices are up to or ahead of the point in time when the access is being made.

Interrupt dependencies:

These need a special note, as there's some important considerations around them. In the Mega Drive, both the VDP and YM2612 can trigger interrupts. Without these interrupts, the YM2612 and VDP (ignoring DMA for a second) are effectively passive devices. They don't make unsolicited interactions with other devices in the system, they just do their own thing and produce their own private, closed output (analog audio and video in particular). Interrupts are the exception though, they're raised by these otherwise passive devices and directly affect the subsequent operation of other devices. They are however, very predictable. In both cases, these interrupts are effectively raised on timers. Without any subsequent external state changes, given the current internal state of the VDP and YM2612, you can predict precisely when the next interrupts will be raised. In Exodus, I actually signal future interrupts ahead of time. The VDP actually flags a "future" interrupt to the 68000 if one can occur based on the current state (IE, the next VINT), and the YM2612 will do the same for the Z80. When the 68000 and Z80 execute, they "see" these pending interrupts in a buffer, but they ignore them until they reach that point in time during their execution. If the internal state of the VDP or YM2612 is changed prior to the time those interrupts would have been raised, and that affects whether that interrupt would occur, my execution model allows these "future" interrupts to be revoked, possibly with updated future interrupts then issued. This model allows me to treat the VDP and YM2612 as purely passive devices, even though they can raise interrupts, as the devices affected by them know what they will do before they do it. This means the 68000 never has to synchronize with the VDP at all unless it's directly interacting with it, and the same is true with the Z80 and the YM2612. Although I've focused on interrupts here, bus requests or any other kind of interaction can use the same approach, as long as the future access is predictable in advance. This allows me to handle VDP external DMA operations in a similar manner.

Private output:

This is important, because it's a note about what isn't a dependency at all. I'm going to focus on the PSG. Let's look at what the PSG actually involves in terms of interaction. It has a set of internal write-only registers, no interrupts, and no observable output from the CPU perspective at all. If you remove it entirely, the CPU wouldn't know, as long as the memory write doesn't fail. Of course, changing PSG registers affects the sound output, but does that mean you need to block, for example, the 68000 CPU emulation while you generate output samples for the PSG? No, it doesn't. You should never have to synchronize with the PSG, as long as you entirely separate the task of producing the sound output. Instead of generating audio samples on port writes, have a separate worker thread for the PSG that's independent of the main execution thread(s) for the system. Keep a buffer which records register state changes and the time they occur, where register writes during system execution simply add entries to this buffer. At a time of your choosing, you dispatch an accumulated block of time which you've finished executing to the worker thread, which then generates the actual output samples, while applying the accumulated register state changes at the appropriate time. If you design this kind of buffer well, it can not only be lock free, but also almost entirely memory allocation and memory barrier free too, giving minimum overhead.

On the same issue, lets now look at the YM2612. It has interrupts, and it has readable output. This requires at least some synchronization to be performed if the status register is read. Does it require the entire analog audio output to be produced though? No, although some obscure test register modes might add some requirements there, in general it just requires the "busy" flag and timer overflow flags to be correct. Now lets look at the VDP. It does have an external DMA mode, where it can take bus ownership and perform external reads itself. This however only occurs on demand, in direct response to register writes. It does have a readable status register, and readable data too. These will certainly require a level of processing to be performed in order to calculate the correct results. Do we need to actually produce the full video output in order to calculate this information though? No, we don't. You can use the same technique of buffering register changes and the time they occur, and perform the actual output generation in a separate worker thread, that "floats" independently from the execution process, and has minimum synchronization requirements.

Getting to the key point summary now, I think there are two main points to maximizing performance for emulation on complex systems:

1. Minimize synchronization

2. Push work off the critical path

On point 1, I outlined some tips above around recognizing some common types of dependencies, and thinking about how to limit when you synchronize devices. You want to go fine-grained with this, and look at everything that can be read, written, or triggered when interacting with other devices. How/when/what you synchronize may vary based on specific addresses that are written, and even specific data that's being written where the data affects the subsequent operations. If you reason about the results, you can identify the weakest possible level of synchronization that's required to still guarantee perfect timing. This is what you want to aim for. The cooperative-threading model byuu uses is a great example of this (Sidenote: do you think the upcoming C++ coroutine work has application to this issue in your model byuu?). A spin on this model can also be used in a multithreaded model, where some devices may be time-sharing on the same thread, and some may be running independently on another thread. Anything that shaves time off synchronization is a good thing.

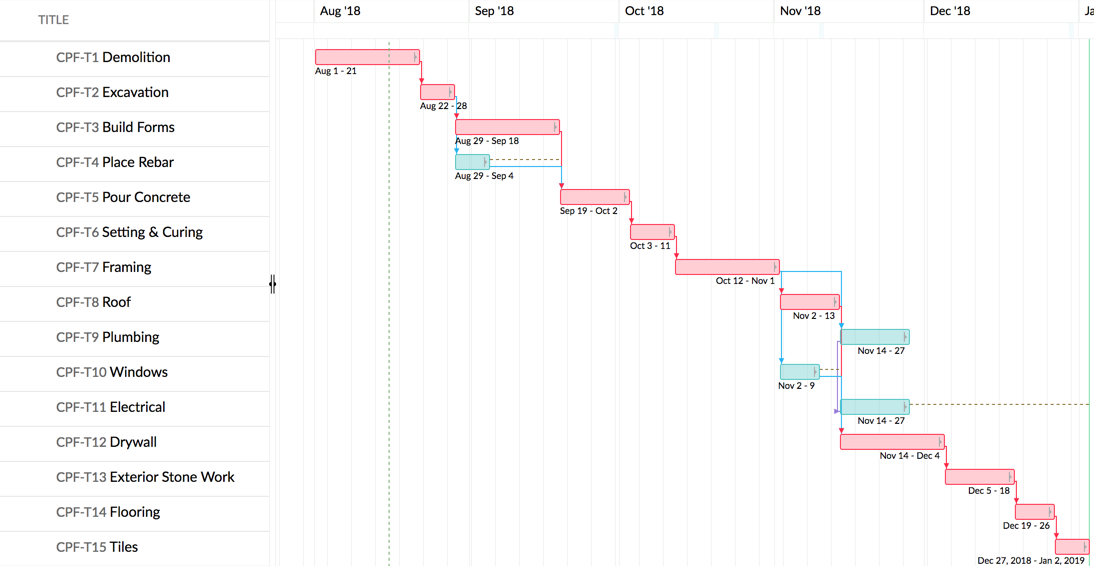

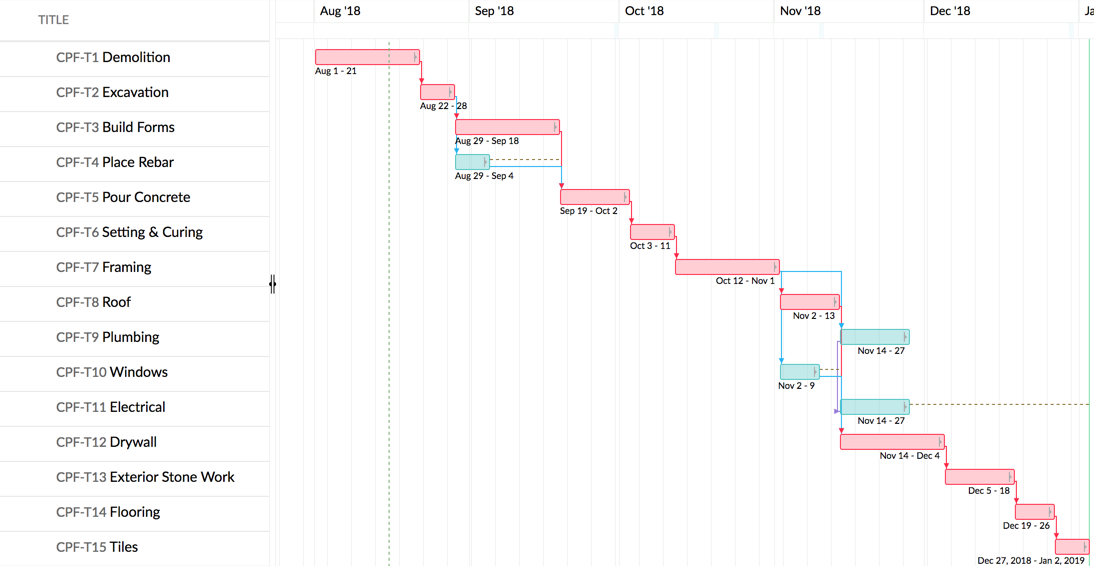

For point 2, pushing work off the critical path, I'll speak a bit more about it. The critical path is a simple concept, and it has essentially the same meaning/implications in both scheduling and multithreaded programming. Here's a gantt chart I've shamelessley ripped for illustration purposes:

Those items in red are our critical path, things that need to happen sequentially and together form a chain that takes the longest time. In the case of the Mega Drive, that 68000/Z80 combo is going to be on your critical path (unless perhaps you're doing a dynarec or some other kind of reinterpretation/HLE, but I don't know that those techniques combine well with more precise, timing accurate emulation). The speed at which you can emulate those two devices together with perfect timing accuracy is what will be the biggest decider in how fast your emulator runs. It's important to note that, with multiple cores available, it's actually possible to take more cycles (longer CPU time), and make your code theoretically slower, but if it saves time on your critical path it can result in your emulator running faster in the real world (wall clock time). This is because idle CPU cores are effectively a wasted resource. If you can make use of them in otherwise dead time while you're waiting for your critical path items to complete, it's effectively free processing. Your goal is not only to optimize the tasks on the critical path to run faster, it's also to shift as much work off the critical path to background threads as possible. Minimizing synchonisation points, such as through the cooperative-threaded scheduler byuu has employed, optimizes the critical path. My example above of buffering register writes to the PSG and generating samples on a background thread, pushes work off the critical path. In my view, the best model is to combine both techniques.

Exodus does have another lever it can pull, which is a bit more extreme. I built Exodus to support "rollbacks", which allows the system to actually get execution wrong the first time around, roll back to an earlier state, then execute again with advance knowledge about future ordering/timing requirements. This isn't something you want to rely on for normal execution as it results in major performance penalties, since all the work you throw away is dead time, and there's some overhead for implementing it. I use it mostly to support debug features, such as breakpoints, watchpoints, or other conditions which actually want to halt the emulation environment. In these cases the wasted time isn't important as we're stopping anyway, and it allows me to ensure that the time when we want to stop is known, so no devices advance past this point and everything can be perfectly in sync when the system stops. The same feature could also be used to handle really obscure edge-case timing issues, which are unlikely (or never) occur in normal games released for the system, such as crazy test register features, but would be difficult to support or incur significant performance penalties to emulate even if they're not used. Triggering a rollback in these cases might be preferable for simplicity, but generally speaking you would want to try and avoid relying on rollbacks for normal execution, or performance can become very uneven depending on the code being run on the system.

I've just written a novel, so I think I'll leave it there. Hopefully this is useful to someone. Happy to answer questions and listen to feedback/suggestions.